Code

library(tidyverse)

library(tidymodels)

library(finetune)

library(vip)With {xgboost}

Jessica Helmer

March 27, 2026

v5_dat_wide <- v5_dat |>

# condensing denominator neglect scores down to one score per item pair

mutate(.by = c(subject_id, item),

score = ifelse(task == "dn.c" | task == "dn.s",

mean(score),

score)) |>

unique() |>

select(-c(time, task)) |>

pivot_wider(names_from = "item", values_from = "score",

id_cols = c(subject_id, sscore)) |>

# alert alert !!

drop_na(){xgboost}Splitting data into train and test.

Specifying model (S-score predicted by everything else) and normalizing all predictors.

Specifying hyperparameters and which are going to be tuned.

Defining grid of candidate hyperparameter combinations.

# A tibble: 100 × 5

tree_depth min_n mtry sample_size learn_rate

<int> <int> <int> <dbl> <dbl>

1 5 12 43 0.808 0.00266

2 5 32 34 0.833 0.0236

3 5 17 29 0.576 0.0248

4 5 27 9 0.672 0.00443

5 5 9 21 0.899 0.0260

6 5 3 46 0.697 0.0129

7 5 16 76 0.646 0.0343

8 5 21 82 0.859 0.0102

9 5 37 61 0.687 0.00811

10 5 10 68 0.884 0.0413

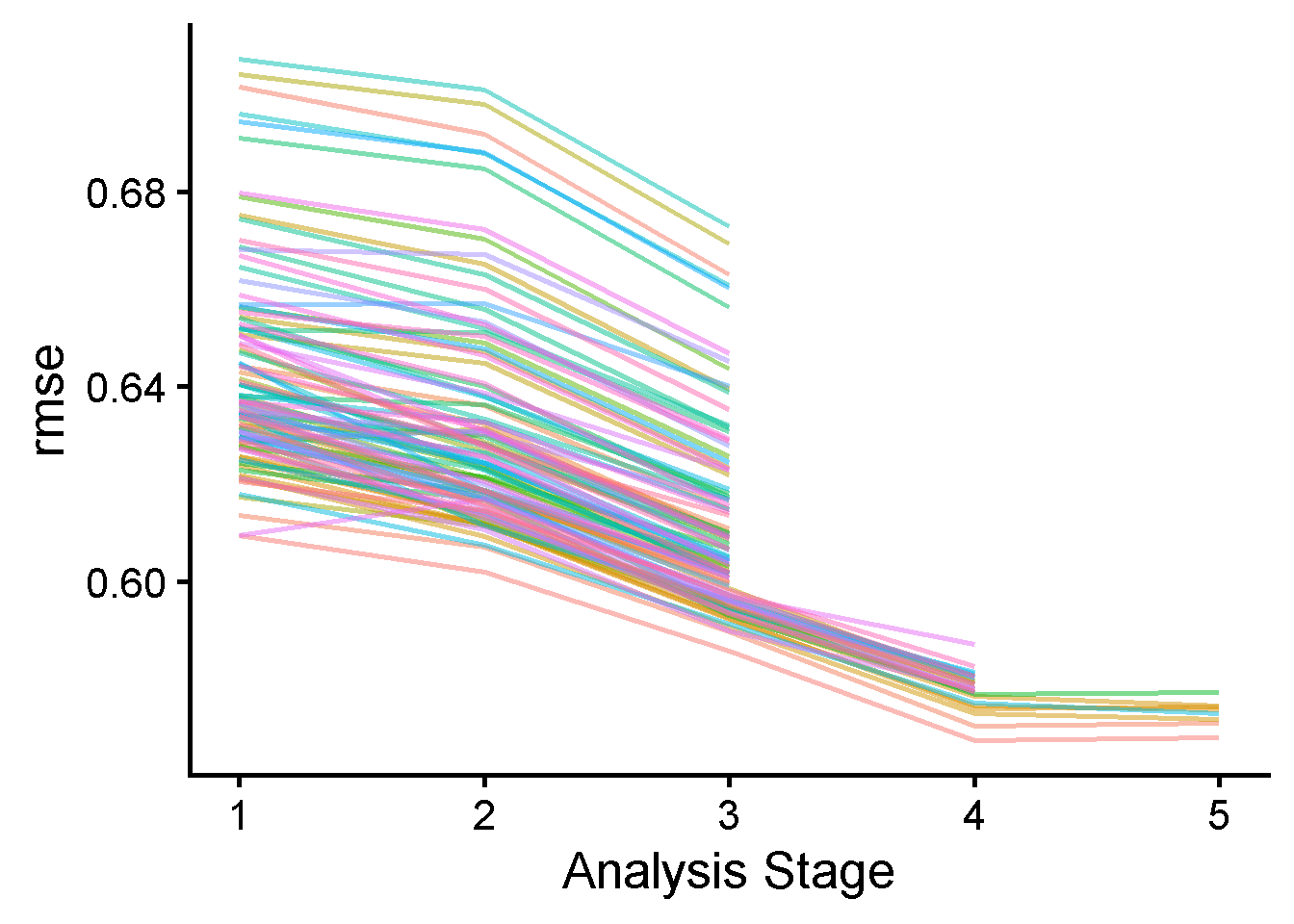

# ℹ 90 more rowsFive-fold cross-validation for tuning.

doParallel::registerDoParallel()

set.seed(234)

xgb_rs <- tune_race_anova(

xgb_wf,

v5_dat_folds,

grid = xgb_grid,

control = control_race(verbose_elim = TRUE))

if (!file.exists(here::here("Data", "xgboost"))) dir.create(here::here("Data", "xgboost"))

saveRDS(xgb_rs, here::here("Data", "xgboost", "xgb_rs.rds"))

Highest-performing hyperparameter combinations

# A tibble: 5 × 11

mtry min_n tree_depth learn_rate sample_size .metric .estimator mean n

<int> <int> <int> <dbl> <dbl> <chr> <chr> <dbl> <int>

1 1 20 8 0.0123 0.924 rmse standard 0.568 5

2 2 10 6 0.00774 0.667 rmse standard 0.571 5

3 7 20 7 0.00850 0.510 rmse standard 0.572 5

4 46 3 5 0.0129 0.697 rmse standard 0.573 5

5 5 18 5 0.00559 0.909 rmse standard 0.574 5

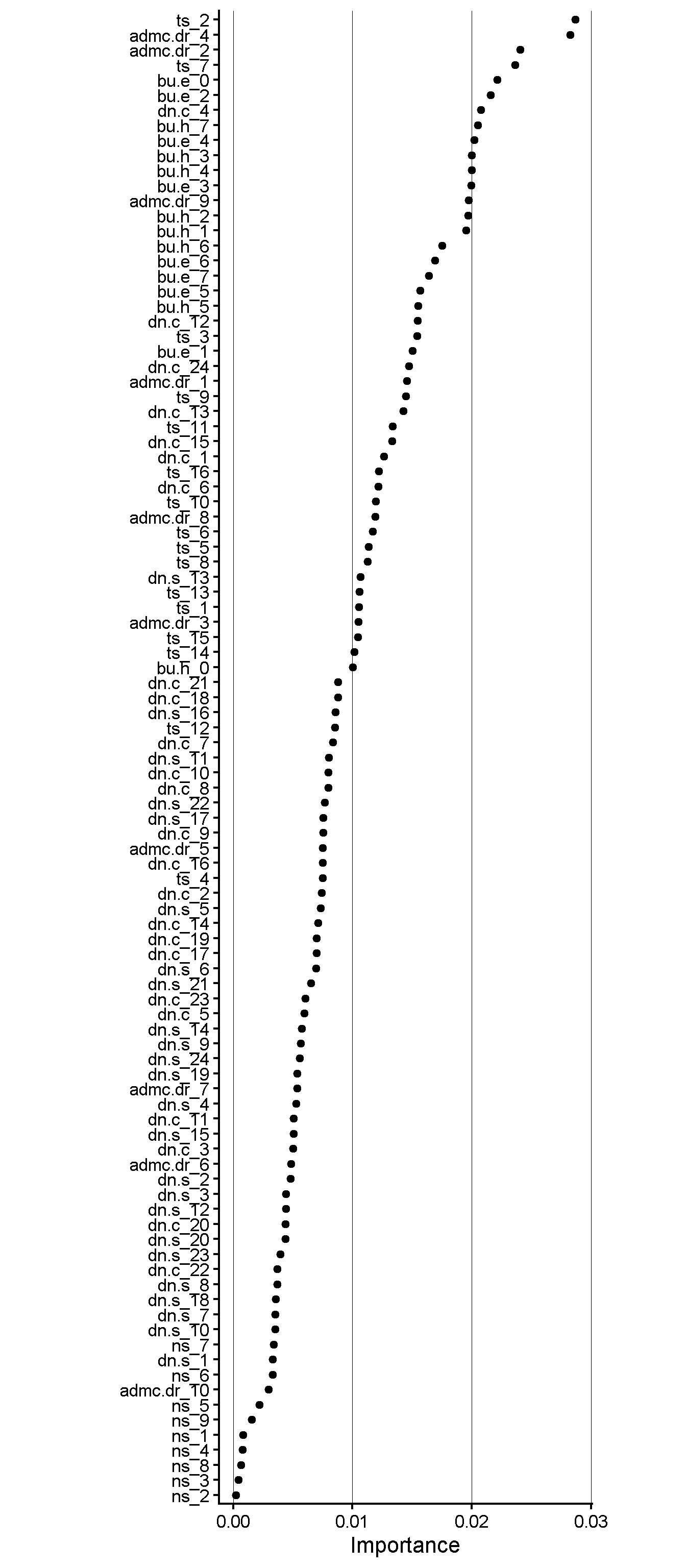

# ℹ 2 more variables: std_err <dbl>, .config <chr>Importance of items

extract_workflow(xgb_last) %>%

extract_fit_parsnip() |>

vi() |>

saveRDS(here::here("Data", "xgboost", "xgb_item-importance.rds"))

extract_workflow(xgb_last) %>%

extract_fit_parsnip() %>%

vip(geom = "point", num_features = 100) +

theme_classic(base_size = 16) +

theme(aspect.ratio = 4,

panel.grid.major.x = element_line(linewidth = 0.2))